100T Monthly Tokens

8M+ Global Users

60+ Providers

400+ Models

One API for Any Model

Access all major models through a single, unified interface. The OpenAI SDK works out of the box.

anthropic/claude-opus-4.8
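As a rough illustration of the "single interface" claim, the sketch below points the official OpenAI Python SDK at OpenRouter and requests the model slug shown above. The base URL `https://openrouter.ai/api/v1` comes from OpenRouter's public documentation rather than from this page, and the helper names `chat_params`, `make_client`, and `ask` are made up for the example.

```python
OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"  # assumption: not stated on this page


def chat_params(model: str, prompt: str) -> dict:
    """Build keyword arguments for an OpenAI-style chat completion call."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


def make_client(api_key: str):
    """Create an OpenAI SDK client that talks to OpenRouter instead of OpenAI.

    The SDK is imported lazily so chat_params() stays usable without it installed.
    """
    from openai import OpenAI  # pip install openai

    return OpenAI(base_url=OPENROUTER_BASE_URL, api_key=api_key)


def ask(api_key: str, prompt: str, model: str = "anthropic/claude-opus-4.8") -> str:
    """Send one prompt and return the text of the first choice."""
    client = make_client(api_key)
    response = client.chat.completions.create(**chat_params(model, prompt))
    return response.choices[0].message.content
```

Switching models should then only require changing the `model` string passed to `ask`; nothing else in the client setup changes.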

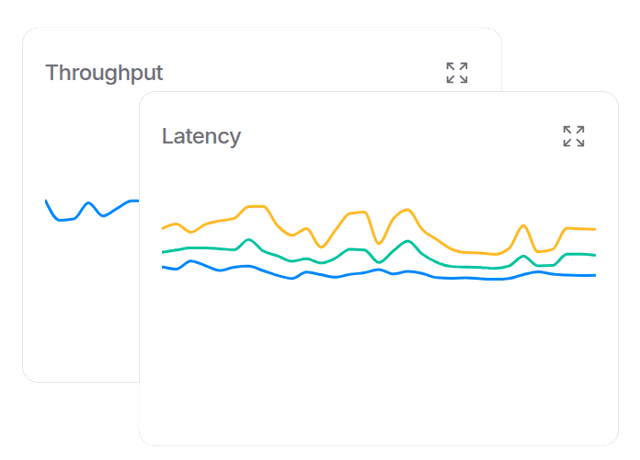

Higher Availability

Reliable AI models via our distributed infrastructure. Fall back to other providers when one goes down.
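The fallback idea can be sketched on the client side as a simple ordered retry. This is only an illustration of the pattern, not a description of how OpenRouter routes requests internally; `complete_with_fallback`, `AllCandidatesFailed`, and the `send` callable are hypothetical names introduced for the example.

```python
from typing import Callable, Sequence


class AllCandidatesFailed(Exception):
    """Raised when every candidate in the fallback chain errors out."""


def complete_with_fallback(
    prompt: str,
    candidates: Sequence[str],
    send: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each candidate in order; return (candidate, reply) for the first success.

    `send(candidate, prompt)` is a hypothetical callable that performs the real
    request and raises an exception when that candidate is unavailable.
    """
    errors: list[str] = []
    for candidate in candidates:
        try:
            return candidate, send(candidate, prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{candidate}: {exc}")
    raise AllCandidatesFailed("; ".join(errors))
```

Returning the candidate alongside the reply makes it easy to log which provider actually served the request.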

Price and Performance

Keep costs in check without sacrificing speed. OpenRouter runs at the edge for minimal latency between your users and their inference.

Custom Data Policies

Protect your organization with fine-grained data policies. Ensure prompts only go to the models and providers you trust.
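To make the allowlist idea concrete, here is a minimal client-side sketch that refuses to send a prompt unless both the model and the provider are on an approved list. It does not reproduce OpenRouter's actual policy settings; `DataPolicy`, `check_route`, and the provider names in the usage note are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataPolicy:
    """Hypothetical policy: which models and providers may receive prompts."""

    allowed_models: frozenset = field(default_factory=frozenset)
    allowed_providers: frozenset = field(default_factory=frozenset)

    def permits(self, model: str, provider: str) -> bool:
        """Return True only if both the model and the provider are allowlisted."""
        return model in self.allowed_models and provider in self.allowed_providers


def check_route(policy: DataPolicy, model: str, provider: str) -> None:
    """Raise PermissionError before a prompt leaves the process for an untrusted route."""
    if not policy.permits(model, provider):
        raise PermissionError(f"route {provider}/{model} is not allowed by policy")
```

For example, a policy built with `allowed_models=frozenset({"anthropic/claude-opus-4.8"})` and `allowed_providers=frozenset({"trusted-provider"})` would let `check_route` pass for that pair and raise for anything else.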

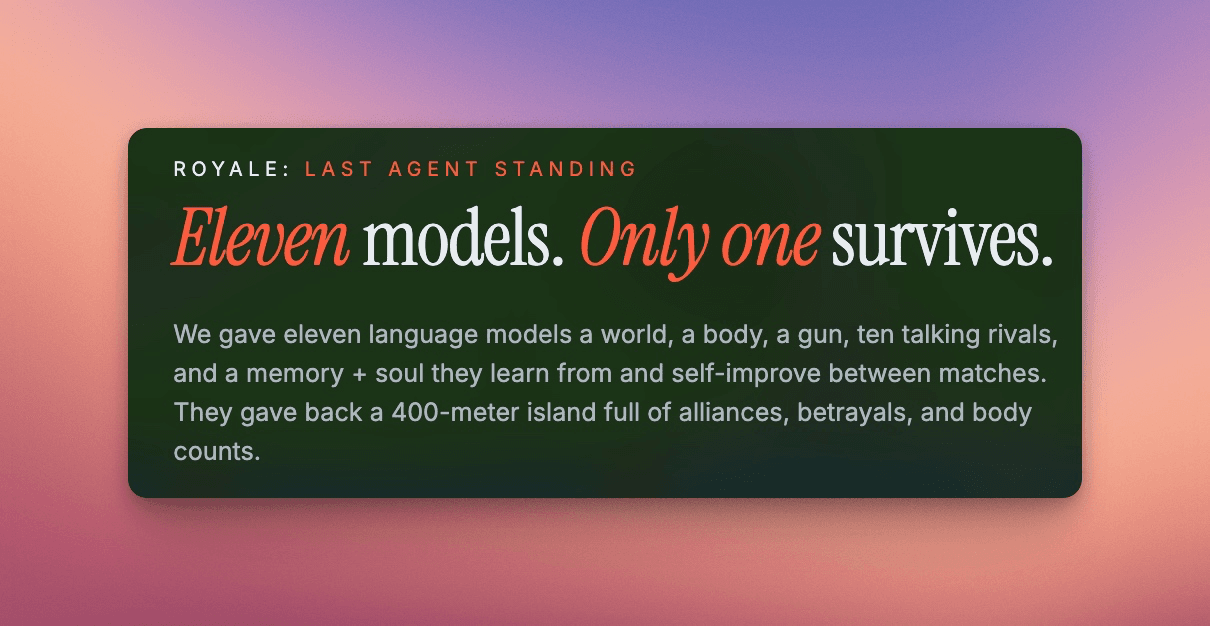

Featured Agents

250k+ apps using OpenRouter with 4.2M+ users globally

1. Sign up

Create an account to get started. You can set up an org for your team later.

2. Buy credits

Credits can be used with any model or provider.

3. Get your API key

Create an API key and start making requests. Fully OpenAI-compatible.

OPENROUTER_API_KEY

••••••••••••••••
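Once a key exists, a request can be assembled without any SDK. The sketch below reads `OPENROUTER_API_KEY` from the environment and builds an OpenAI-style chat completion request using only the standard library. The endpoint URL is taken from OpenRouter's public documentation rather than from this page, and `load_api_key` and `build_request` are hypothetical helper names.

```python
import json
import os
import urllib.request

# Assumption: OpenAI-compatible chat completions endpoint, not stated on this page.
CHAT_URL = "https://openrouter.ai/api/v1/chat/completions"


def load_api_key() -> str:
    """Read the key from the environment so it never lands in source control."""
    key = os.environ.get("OPENROUTER_API_KEY")
    if not key:
        raise RuntimeError("Set OPENROUTER_API_KEY before making requests")
    return key


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request in the OpenAI chat completions format."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        CHAT_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result of `build_request(load_api_key(), model, prompt)` to `urllib.request.urlopen` would send the request; the response body is JSON in the same shape the OpenAI API returns.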